Not a Tour. A Generative Experience. What Replaces Product Tours in the AI Era.

Mickey Alon


Product tours don't work. The industry built a $2B category around them anyway.

I've spent 20+ years building SaaS products, including co-founding Gainsight PX, a product experience platform that lived squarely in the digital adoption space.

I've seen the product tour model from the inside. Tours solve the wrong problem. They teach users to navigate complexity instead of eliminating it. The replacement isn't a better tour. It's a generative experience — an AI Operator that executes the work tours only described.

Users don't fail because they lack instructions. They fail because the gap between intent and action is too wide. And that gap is different for every user at every moment.

The Product Tour Was a Band-Aid, Not a Solution

Product tours were invented to compensate for unintuitive software. They were never meant to create great user experiences.

They were a patch. A way to narrate complexity instead of eliminating it.

The logic was sound in 2012: a new user signs up, doesn't know where anything is, so you walk them through the key features step by step.

The problem is that this logic assumed every user needed the same information in the same order. In modern B2B SaaS, where personas, roles, and goals vary wildly within a single product, that assumption is indefensible.

The rise of product-led growth (PLG) made it worse. PLG gave millions of self-serve users direct access, each arriving with different intent, different context, and different urgency. A marketing manager exploring your analytics dashboard has nothing in common with a developer configuring your API. A single linear tour cannot serve both. It doesn't even try.

Multi-step product tours complete at roughly 5%. That figure is consistent across Userpilot, Tandem, and Intercom community data. The steepest drop-off happens at steps 3–4, exactly where the workflow requires real work rather than passive clicking. Tooltip click-through rates drop by more than 70% after the first session. And Pendo's 2024 Product Adoption Benchmark found that features highlighted in tours were used by only 18% of new users within 30 days. Being shown something is not the same as doing it.
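The 5% figure is easier to grasp as compounding loss. Here is a minimal sketch, assuming a hypothetical five-step tour in which every step keeps the same fraction of the remaining users (real tours are lumpier, with the steep drop at steps 3–4). Overall completion is the product of per-step retention, so a five-step tour that ends at 5% is losing roughly 45% of its remaining users at every step.

```python
from __future__ import annotations

import math


def tour_completion(step_retention: list[float]) -> float:
    """Overall completion is the product of the fraction kept at each step."""
    return math.prod(step_retention)  # math.prod requires Python 3.8+


def implied_step_retention(completion: float, steps: int) -> float:
    """Constant per-step retention r that satisfies r ** steps == completion."""
    return completion ** (1 / steps)


# Assumption: a five-step tour with uniform drop-off (illustrative only).
r = implied_step_retention(0.05, 5)
print(f"per-step retention: {r:.2f}")               # per-step retention: 0.55
print(f"completion: {tour_completion([r] * 5):.2f}")  # completion: 0.05
```

No single step looks catastrophic on its own. The damage comes from multiplying ordinary losses across a sequence.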

Product tours don't fail because they're poorly designed. They fail because they solve the wrong problem. They assume users want to learn. Users want to do.

Why Digital Adoption Platforms Became a $2B Dead End

WalkMe, Whatfix, Pendo Guides, Appcues. These tools defined the digital adoption platform category. They made sense for the 2015 problem: enterprise software was complex, training was expensive, and overlaying step-by-step guidance on top of the UI was a genuine improvement over PDF manuals and classroom sessions.

But the category has hit a structural ceiling it can't grow past.

Legacy DAPs are overlay layers. They sit on top of your product but have no understanding of user intent, context, or application state.

Every flow is manually authored. Every user segment is a guess. Every UI change risks breaking existing tours. The system scales linearly with product complexity, which means it doesn't scale at all.

The maintenance tax alone is brutal. Engineering teams spend 4–6 hours per release cycle fixing onboarding flows that broke when the design team shipped a UI update. For products on continuous delivery cycles, that's every sprint. Everest Group research shows DAP implementations take 3–6 months on average to reach full deployment. The cost-to-value ratio breaks down fast outside large enterprises, which can afford dedicated DAP administrators to maintain the system.

When SAP acquired WalkMe for $1.5B in mid-2024, it signaled consolidation, not innovation. WalkMe's future is as an SAP feature, not a standalone category leader.

The deeper issue is what these tools measure.

DAPs optimize for "did the user click the right button," not "did the user achieve their goal." Tour completion rate looks good in a quarterly review. It doesn't correlate with activation, retention, or expansion revenue.

Forrester found that 70% of digital transformation initiatives stall due to user adoption failures. That's the exact problem DAPs were built to solve. The fact that adoption still fails at that rate, after a decade of DAP investment, is the verdict.

The Mechanism: Why Static Guidance Fails in Dynamic Products

The failure of product tours and legacy DAPs isn't a design problem. It's a structural mismatch.

Modern B2B products are not linear. They are state-rich, role-dependent, and context-sensitive. A product tour is a railroad track laid across a landscape that keeps shifting. The moment your product adds a feature, changes a layout, or serves a new persona, every pre-authored flow becomes a little less accurate. Multiply that across dozens of flows and monthly releases, and you get a system that's perpetually out of date.

Nielsen Norman Group research shows that user task-completion paths diverge 3–5x from designer-intended paths within the first five minutes of a session. No tour builder has enough branching logic to cover that.

This is the onboarding cold start problem, a term borrowed from recommendation systems. You have the least data about a user at the exact moment you need the most guidance for them. Product tours try to solve this with assumptions. The right solution solves it with inference.

The real onboarding problem is a real-time AI problem: what does this specific user need right now, given what they've done, what they haven't done, and what they're trying to accomplish? A pre-authored tooltip can't answer that question. An agent can.
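The difference between a pre-authored tour and a state-aware answer fits in a few lines. Everything below is hypothetical: the goal names, the step names, and the assumption that the user's goal is already known. A static tour returns the same sequence to everyone. A contextual answer returns only the steps this user still needs toward their own goal, given what they've already done.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical goals: each maps to the ordered steps needed to reach it.
GOALS: dict[str, list[str]] = {
    "build_churn_report": ["connect_data", "select_metric", "create_report"],
    "configure_api": ["create_api_key", "set_webhook", "send_test_call"],
}

# A pre-authored tour: one fixed sequence, written for one imagined persona.
STATIC_TOUR = ["open_dashboard", "connect_data", "select_metric", "create_report"]


@dataclass
class UserState:
    goal: str  # assumed known here; inferring it is the hard part
    completed: set[str] = field(default_factory=set)  # steps already done


def static_next_steps(state: UserState) -> list[str]:
    """A tour ignores who the user is and what they've already done."""
    return list(STATIC_TOUR)


def contextual_next_steps(state: UserState) -> list[str]:
    """Return only the steps still missing for this user's goal, in order."""
    return [step for step in GOALS[state.goal] if step not in state.completed]


developer = UserState(goal="configure_api", completed={"create_api_key"})
print(static_next_steps(developer))      # the analytics tour, again
print(contextual_next_steps(developer))  # ['set_webhook', 'send_test_call']
```

For the developer who has already created an API key, the static tour still walks through the analytics dashboard, while the contextual version returns only the two remaining API steps. The sketch deliberately assumes the goal is given; inferring that goal from behavior is the real-time problem the paragraph above describes.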

The New Expectation: Users Trained by Consumer AI

There's a compounding force that makes the product tour's failure irreversible.

Every user who has typed a question into ChatGPT or watched an AI generate a document from a prompt has internalized a new expectation: software should understand what I want and handle it.

This expectation didn't exist when product tours were designed. In 2015, the baseline assumption was "I will learn this product over time." Users accepted a learning curve because there was no alternative. That tolerance is gone.

When a user opens your B2B product for the first time and encounters a five-step tooltip sequence, they're not comparing it to your competitor's tooltip sequence. They're comparing it to the last time they told an AI what they wanted and it just did it. Against that expectation, the product tour doesn't just underperform. It feels like a step backward.

This is why incremental improvements to tours can't close the gap. Better targeting, smarter segmentation, A/B-tested copy. None of it matters. The gap isn't between your tour and a better tour. It's between your tour and a fundamentally different interaction model.

Enter the Generative Experience: From Guidance to Execution

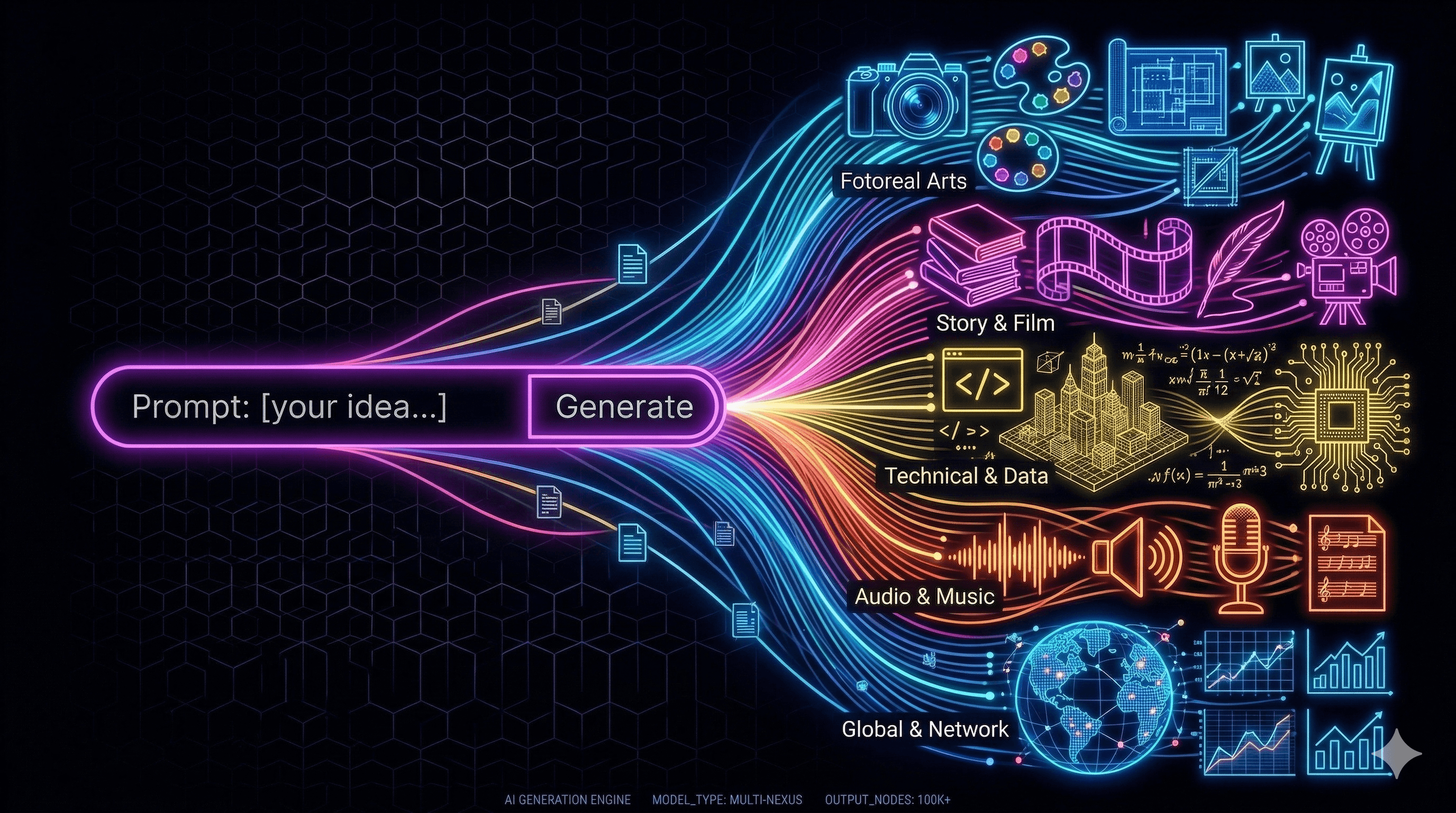

A generative experience is an interface paradigm where an AI agent embedded in the product observes user behavior, infers intent, and takes autonomous action on the user's behalf — guiding, assisting, or completing tasks entirely.

This is not a chatbot bolted onto a help center. It's not a copilot that suggests next steps. It's an AI Operator that executes.

The distinction matters:

A product tour says: "Click here, then here, then here."

A chatbot says: "You might want to try the reporting module."

An AI Operator says: "I see you're trying to build a churn report. I've created one based on your data. Want me to walk you through the results?"

The tour narrates friction. The chatbot describes friction. The operator eliminates it.
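The observe, infer, act loop in the definition above can be sketched as a toy. The event names, intent signals, confidence thresholds, and action registry are all hypothetical, and the simple signal-overlap score stands in for whatever model a production operator would actually use. What matters is the shape: score intents from session events, then guide, assist, or complete depending on how confident the agent is.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Mode(Enum):
    GUIDE = "guide"        # explain the next step
    ASSIST = "assist"      # propose the work and wait for confirmation
    COMPLETE = "complete"  # execute the task on the user's behalf


@dataclass
class Event:
    name: str  # a UI action observed by the embedded agent (hypothetical names)


# Hypothetical intent signatures: session events that suggest each goal.
INTENT_SIGNALS: dict[str, set[str]] = {
    "build_churn_report": {
        "viewed_retention_chart",
        "filtered_by_cancel_date",
        "opened_report_builder",
    },
    "configure_api": {"opened_api_settings", "viewed_api_docs"},
}


def infer_intent(events: list[Event]) -> tuple[str | None, float]:
    """Score each intent by the share of its signals seen so far; keep the best."""
    seen = {e.name for e in events}
    best: str | None = None
    best_score = 0.0
    for intent, signals in INTENT_SIGNALS.items():
        score = len(seen & signals) / len(signals)
        if score > best_score:
            best, best_score = intent, score
    return best, best_score


def choose_mode(confidence: float) -> Mode:
    """Act more autonomously only as confidence in the inferred intent rises."""
    if confidence >= 0.6:
        return Mode.COMPLETE
    if confidence >= 0.4:
        return Mode.ASSIST
    return Mode.GUIDE


def operator_step(events: list[Event], actions: dict[str, Callable[[], str]]) -> str:
    """One pass of the observe -> infer -> act loop."""
    intent, confidence = infer_intent(events)
    if intent is None:
        return "No clear intent yet; keep observing."
    mode = choose_mode(confidence)
    if mode is Mode.COMPLETE:
        return actions[intent]()  # do the work, not just describe it
    if mode is Mode.ASSIST:
        return f"Looks like you're working on {intent}. Want me to do it?"
    return f"Here is the next step toward {intent}."


actions = {
    "build_churn_report": lambda: "Created a churn report from your data.",
    "configure_api": lambda: "Configured your webhook and sent a test call.",
}
session = [Event("viewed_retention_chart"), Event("filtered_by_cancel_date")]
print(operator_step(session, actions))  # Created a churn report from your data.
```

The design choice worth noting is that autonomy is gated on confidence. The operator executes only when the evidence for an intent is strong, and otherwise falls back to proposing the work or explaining the next step.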
